Overview

Within the IntelligentSecurityLab, we model, analyze, and solve a wide range of problems in which secure decisions must be made in noisy and adversarial environments. We use rigorous tools from decision, control, optimization, and game theory to model systems, and machine learning to obtain potent defense (and attack) strategies.

AI-Driven Defense

Applying ML and stochastic control to create adaptive security defense strategies.

Adversarial Modeling

Exposing vulnerabilities and identifying stealthy attack vectors on systems.

Prototyping

Building proof-of-concept libraries and applications for automated decision making.

Current Projects

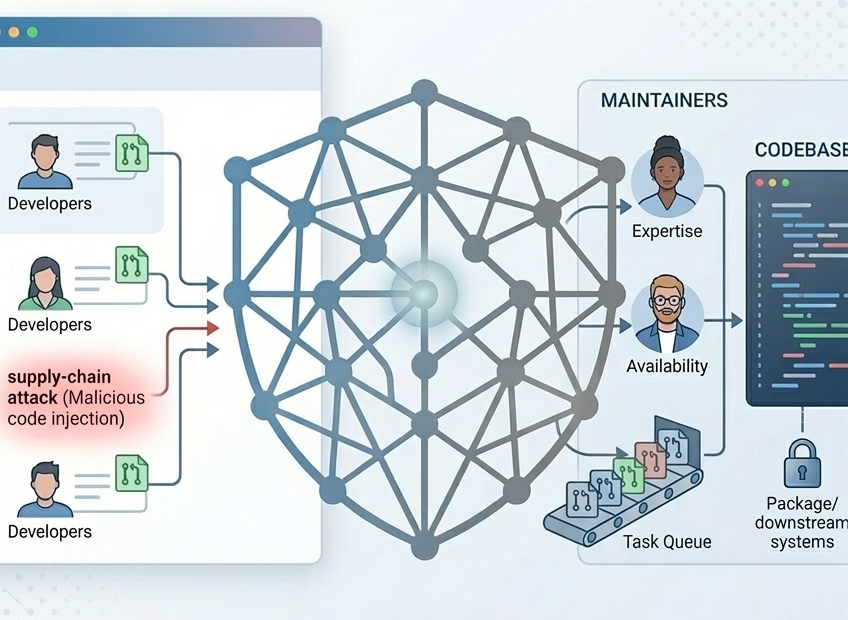

Securing Supply Chains in OSS

This project applies game theory and deep reinforcement learning to protect open-source software from supply-chain attacks hidden in pull requests. By modeling the interaction between attackers and maintainers as general-sum games, the project develops potent strategies that optimize pull request assignments to prevent malicious code injection.

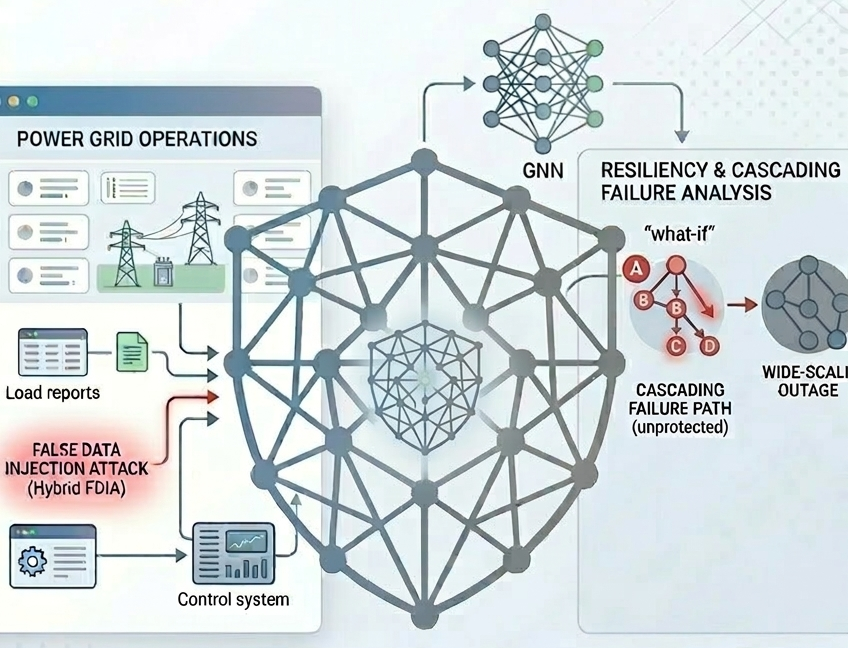

Resiliency in Smart Power Grids

This research advances power grid resiliency by developing Graph Neural Networks to predict cascading failures and modeling Hybrid False Data Injection Attacks (FDIA) against third-party aggregators. By combining topological failure analysis with the study of adversarial data manipulation, the framework identifies critical vulnerabilities and evaluates tradeoffs between safety margins, energy storage, and operational stability.

Previous Projects

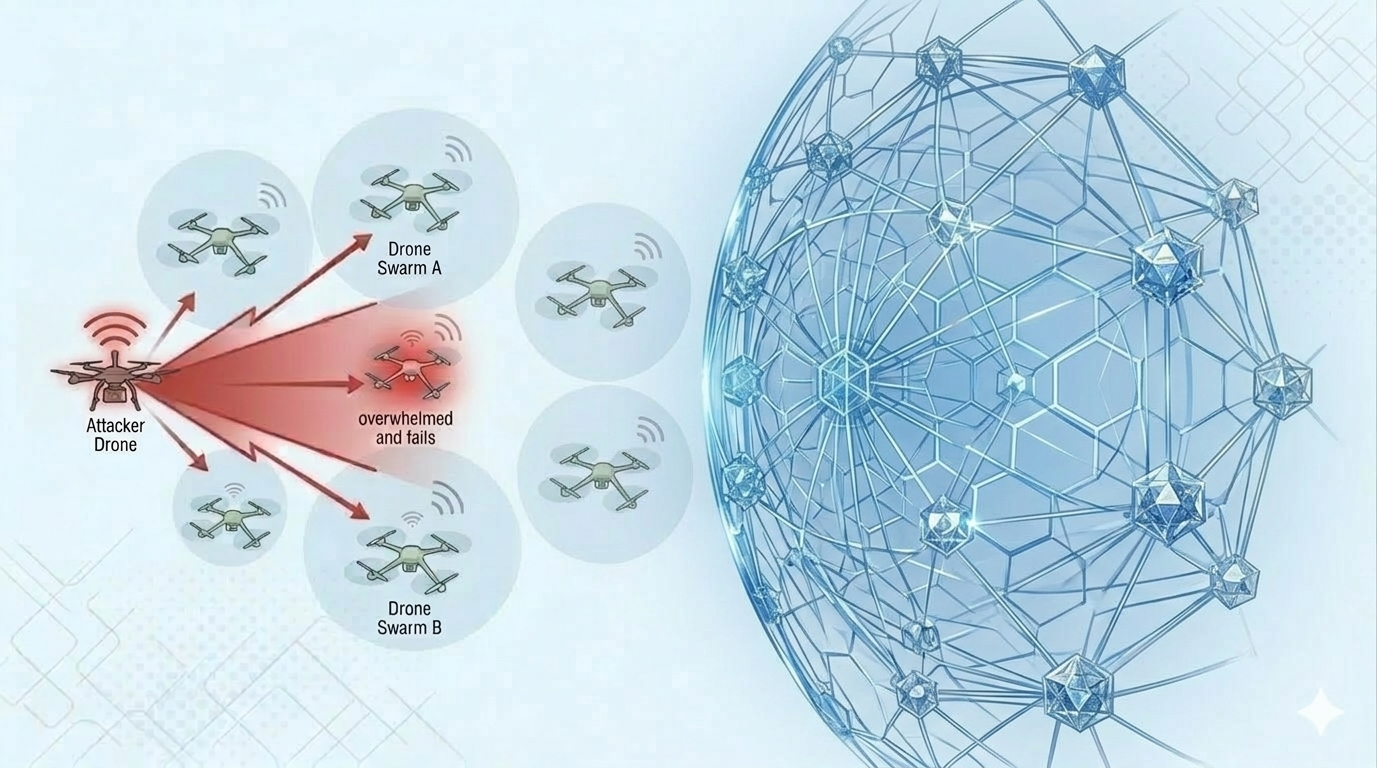

Securing Cyber-Physical Systems

We focus on protecting cyber-physical systems in applications such as intelligent transportation systems, manufacturing, and multi-agent systems. Our approach relies on game-theoretic models that we solve using reinforcement learning methods.

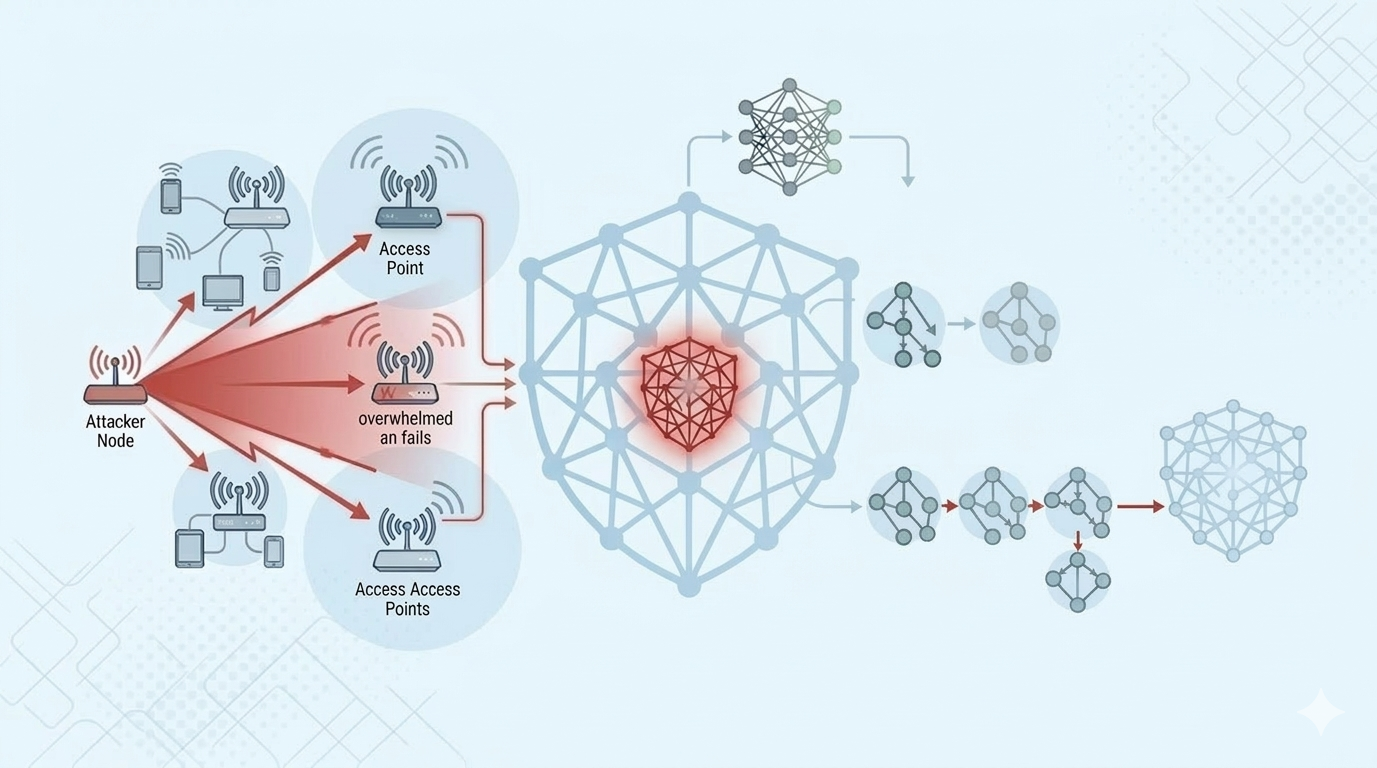

NSF CAREER, NSF SaTC

Protecting Wireless SDN

In this project, we study the susceptibility of dynamic channel allocation methods, commonly used in Software Defined Networks (SDN), to stealthy jamming attacks. Using Markov Decision Process and game-theoretic frameworks, we exposed potent attacks and developed defense mechanisms that mitigate their impact.

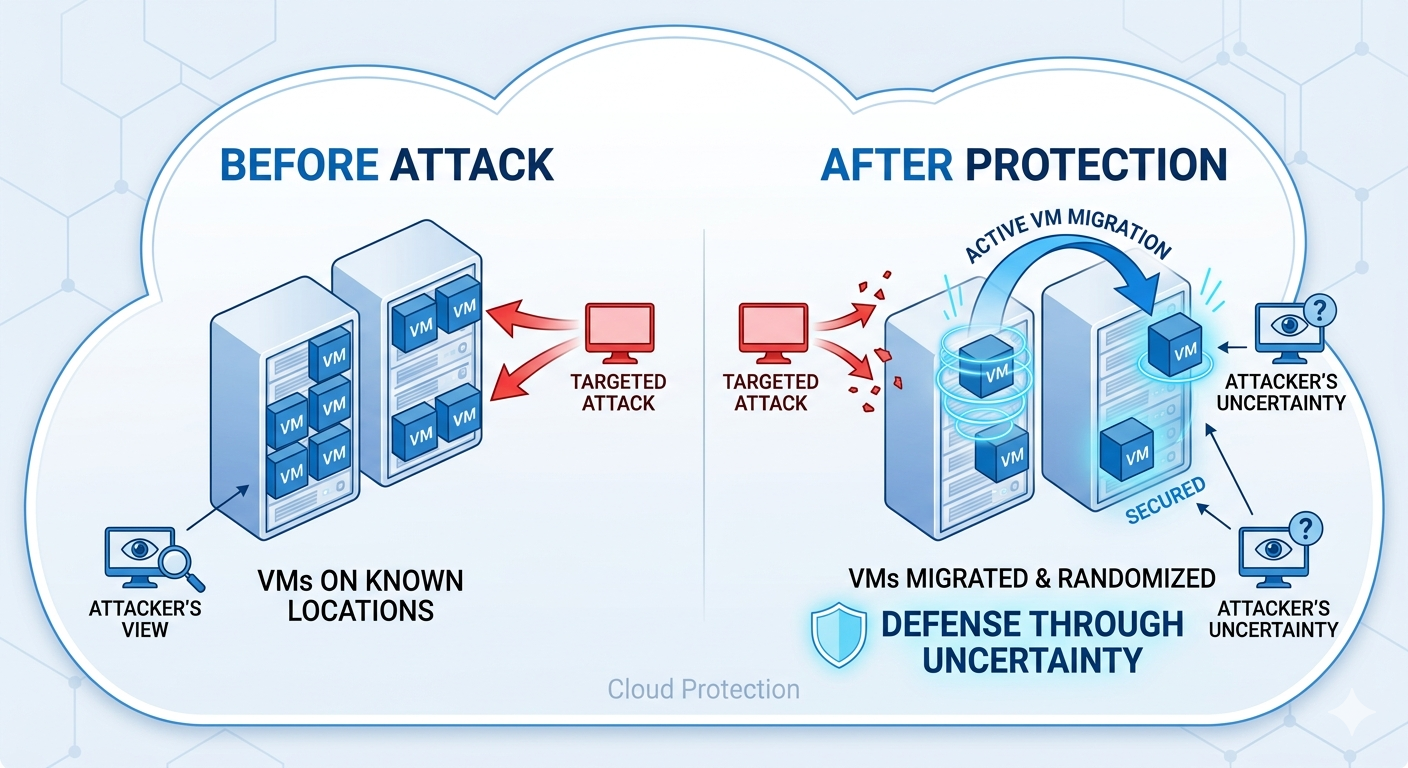

Securing Cloud Infrastructure

Security and privacy in cloud computing are critical components for various organizations that depend on the cloud in their daily operations. Customers’ data and the organizations’ proprietary information have been subject to various attacks in the past. In this project, we develop a set of Moving Target Defense (MTD) strategies that randomize the location of the Virtual Machines (VMs) to harden the cloud against attacks.

AFRL

People

Mina Guirguis

Javad Koushyar

Hoang Duc Bao Vo

Former Graduate Advisees (M.S. Thesis) | First Appointment

- Alireza Tahsini (2019) — “BLOC: A Game Theoretic Approach to Orchestrate CPS Against Cyber Attacks” | Paycom

- Noah Dunstatter (2019) — “Solving Cyber Alert Allocation Markov Games with Deep Reinforcement Learning” | SwRI

- Terry Penner (2016) — “Bandits in the Cloud: A Moving Target Defense Against Multi-Armed Bandit Attack Policies” | IBM

- Lavanya Tammineni (2015) — “Local Overlay-based Mobile Clouds” | HP

- Janiece Kelly (2014) — “Effect of an Interloper Attack on Dynamic Channel Assignment” | GM

- Trevor Hanz (2013) — “An Abstraction Layer for Controlling Heterogeneous Mobile Cyber-Physical Systems” | UT Applied Research Laboratories

- Emad Guirguis (2011) — “On the Effect of Jamming Attacks on Cyber-Physical Systems with the Focus on Target Tracking” | Intel

- Jason Valdez (2008) — “Providing Soft Quality of Service via TCP Congestion Signal Delegation on Aggregated Flows” | Texas Legislative Council

Additional Former Members & REU Students

Clesstian Matala (LREU - U. of Houston), Darryl Balderas (IBM), Vu Nguyen (GM), Michael Ruth (REU - U. Buffalo), Brandon Van Slyke (IBM), Seth Richter (REU - LeTourneau), Agustin Rivera (General Motors), Sheryl Rosenthal (REU - Texas State), Daniel Haller (REU - U. Maryland), Alison Johnson (REU - Texas State), David Reynolds (Microsoft), William Johnson, Nikhil Halkude, Hether Hinze (REU - Texas State), Joseph Valdez, Bassam El Lababedi, Charles Tewksbury, Christopher Page, Christian McArthur, Jason Valdez, Hideo Goto, Joshua Tharp.

Outreach and lab activities

Sponsors

Industry & Agency Partnerships

We look forward to partnering with industry and with local and federal agencies on mutually interesting, research-oriented projects in cybersecurity, optimization, and data analytics.

Interested in AI and Security?

We are seeking motivated students to join our lab.